Your AI.

Your Machine.

Run powerful language models privately on your Mac. Chat, write code, design apps — all without sending a single byte to the cloud.


Powered by open-source models you can trust

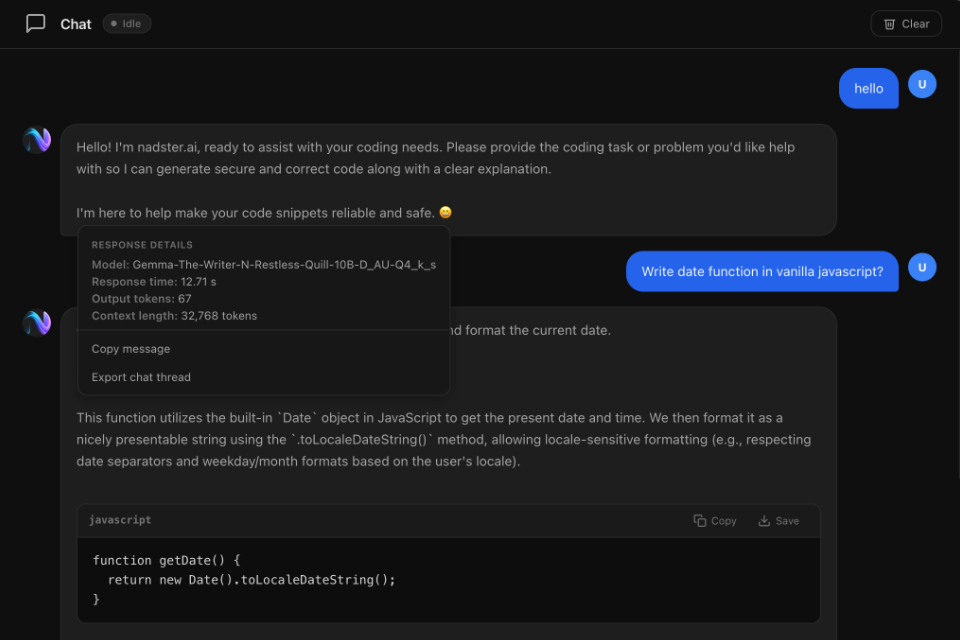

Have natural conversations with a local AI. Ask questions, brainstorm ideas, get instant answers — all private.

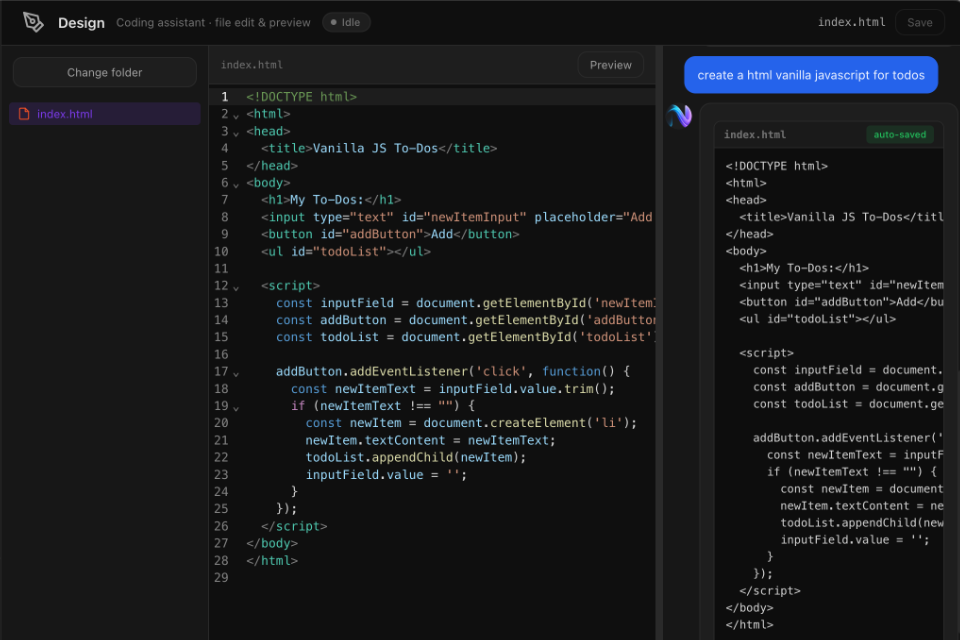

Let AI create and edit files for you. Build apps, write code, and preview results in a built-in editor with live preview.

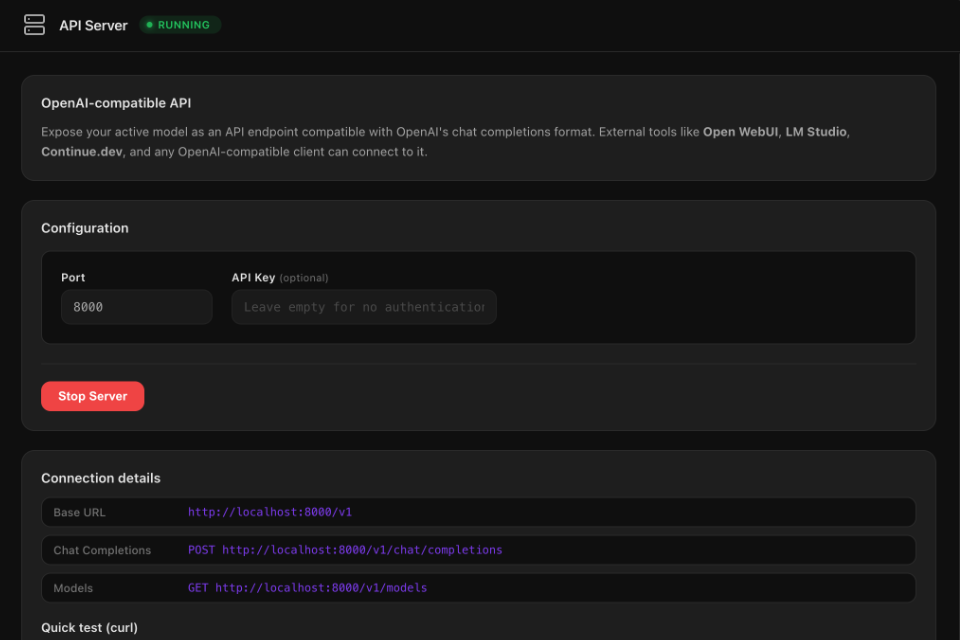

Expose your model as an OpenAI-compatible API. Connect Open WebUI, Continue.dev, or any tool that speaks the OpenAI format.

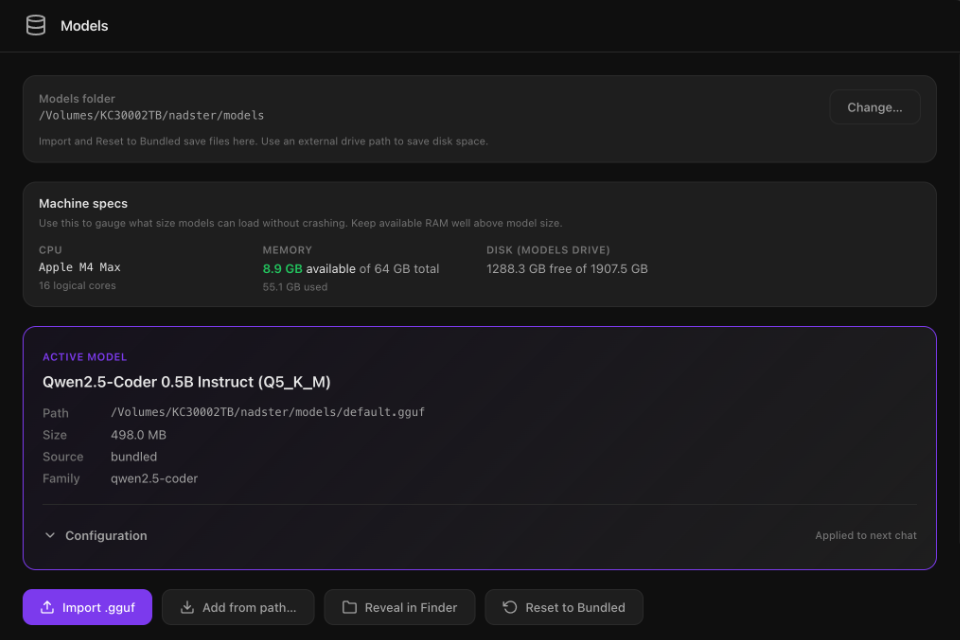

Browse and download optimised models from the built-in store. Filter by size, see RAM requirements, one-click install.

Every prompt, every response stays on your machine. No telemetry, no cloud calls, no data collection. Period.

Optimised for M1/M2/M3/M4 Macs with Metal GPU acceleration. Fast inference even on entry-level hardware.

Sketches, serial monitor, and flash tools for microcontrollers. Prototype and upload code to boards from the app.

Subscribers get ongoing support and free upgrades. Lifetime includes minor updates and half-price major releases.

Store models on an external drive, manage format templates, and control loading options. Full control over where and how models run.

Natural conversation with your local AI assistant.

AI creates and edits code files with live preview.

Browse, download, and manage AI models in one place.

OpenAI-compatible endpoint for external tools.

Pick Basic (£3/mo), Pro (£5/mo), or go Lifetime (£45). Pay securely, then download.

Drag to Applications, launch, and sign in with the account you created at checkout.

Chat, create apps, or connect external tools. Everything stays on your Mac.

Subscriptions include ongoing support + upgrades. Lifetime includes all features with 3 months support.

Prices shown in GBP. Cancel anytime on monthly plans.

For chat + building + local models.

Everything in Basic + developer tools.

Best for long-term use. Limited support.

Major upgrades = significant new version releases (e.g. v2).

Buy Lifetime: £45

For teams, volume licensing, custom deployment.

Secure payment via Stripe. Instant access after purchase. Enterprise: we’ll get back to you.

nadster.ai runs any GGUF-format model. The built-in store has popular models like Qwen, Llama, Mistral, DeepSeek, Gemma, and Phi, optimised for different RAM sizes. You can also import any GGUF model from Hugging Face.

You need an internet connection only to download models from the store. Once downloaded, everything runs 100% offline on your Mac. No data ever leaves your machine.

Any Mac with Apple Silicon (M1, M2, M3, M4) and at least 8 GB of RAM. More RAM lets you run larger models. Even the base M1 MacBook Air can run smaller models comfortably.

The API server turns your local model into an OpenAI-compatible API endpoint. Tools like Open WebUI, Continue.dev (VS Code), LM Studio, or any OpenAI client library can connect to it, with everything running locally.
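As a rough illustration of what "OpenAI-compatible" means for a client, here is a minimal Python sketch that builds and sends a chat request in the OpenAI chat-completions format using only the standard library. The address `http://localhost:8080/v1`, the model name `local-model`, and the helper names `build_chat_request` and `ask` are assumptions made for this example, not values taken from nadster.ai; use the address and model name the app shows when the API server is running.

```python
import json
import urllib.request

# Assumptions for illustration only: replace with the address and
# model name shown in the app when the API server is switched on.
BASE_URL = "http://localhost:8080/v1"
MODEL = "local-model"


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build a POST request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the request to the local server and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    # OpenAI-format responses put the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

With the server running, calling `ask("Summarise this paragraph in one line.")` should return the model's reply as a string. The request goes to localhost, so it does not leave your Mac.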

IoT tools (Pro plan) let you write sketches, use the serial monitor, and flash code to microcontrollers (e.g. Arduino-compatible boards) from inside nadster.ai. Connect a board via USB, edit code, and upload — no separate IDE needed.

We offer monthly subscriptions (Basic £3/mo, Pro £5/mo) with full support and free upgrades, or a one-time Lifetime purchase (£45) with 3 months support and free minor updates. Cancel monthly plans anytime. Enterprise: contact us for volume licensing.

If nadster.ai doesn’t work on your Mac, contact us and we’ll refund you — no questions asked.